Following up on my previous post, we have spent some time building an internal Data Lake at Sopra Steria. The infrastructure is now functional and admitting its first users. Much has been said on building successful Data Science teams. Multidisciplinary

Synchronizing an SQL Database to a Data Lake (Change Data Capture at ingest)

The considerations below result from some recent projects at Sopra Steria. The goal: having built a Data Lake, we want to deliver (ingest) data from various sources, including several instances of an Oracle Database, into the Raw Zone. We want

Why Analysts need Data Lakes?

With substantial analytical needs at Sopra Steria Apps, we are looking to expand our Data Science environment. My thoughts go towards a Data Lake architecture, from a concrete angle, having practical requirements and knowing quite precisely what we want. I’ve

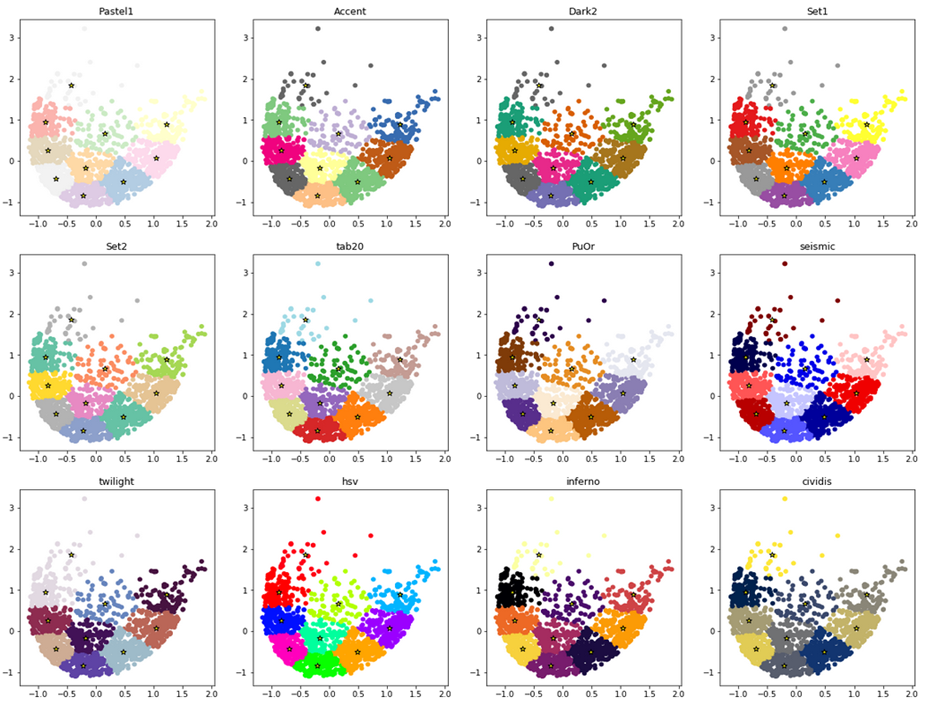

Simple hack to improve data clustering visualizations

Here is how to make your data clusters look pretty in no time (with Python and matplotlib), with a one-liner code hack. I wanted to visualize in Python and matplotlib the data clusters returned by clustering algorithms such as K-means (sklearn.cluster.KMeans)

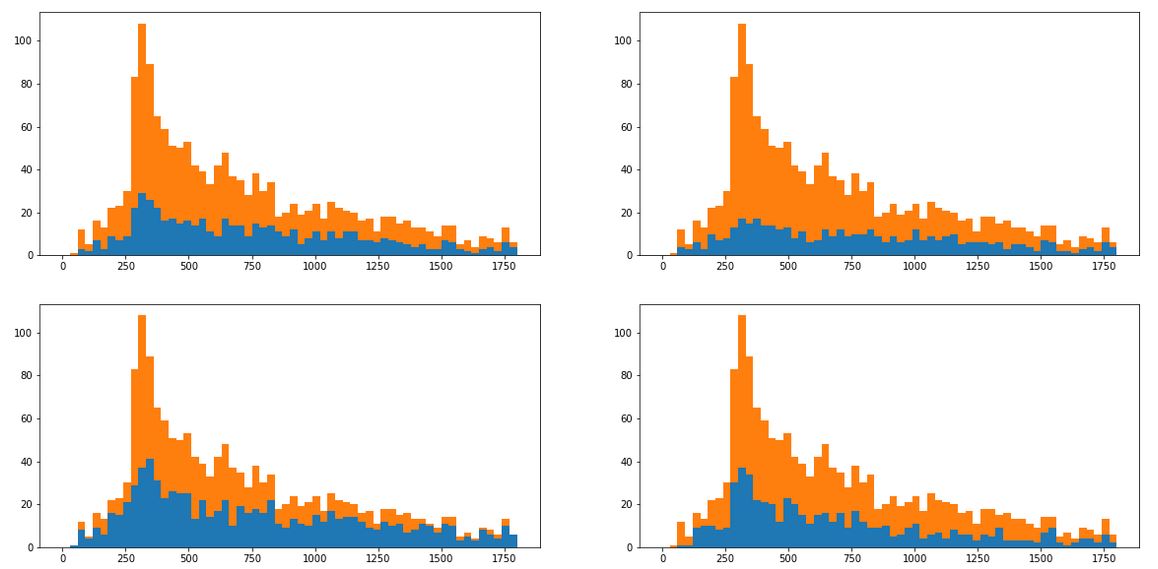

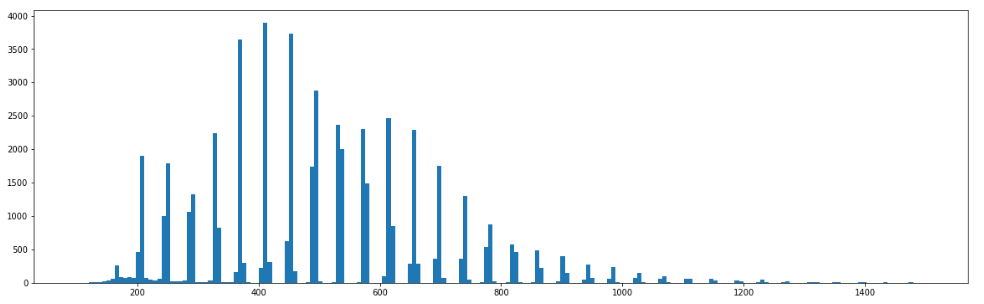

How to isolate data that constitutes a spike in histogram?

We would all love to spot business problems early on, to react before they become painful. You can learn a lot by looking at past problems. Hence, understanding the nature of anomalies in data can bring substantial operational benefits and

Getting started: Apache Spark, PySpark and Jupyter in a Docker container

Apache Spark is a popular distributed computation environment. It is written in Scala; however, you can also interface with it from Python. For those who want to learn Spark with Python (including students of these BigData classes), here’s an intro to

Building End-to-End Production Machine Learning pipelines

Much of the public discourse in Data Science focuses on model optimization (selection of regressors/classifiers, hyperparameter tuning, model training and improvement of the prediction accuracy). Less material is available on using and deploying these trained Machine Learning models in production.

Audit logging for Amazon Redshift

I recently spent a while configuring and analysing the logs for the Amazon Redshift warehouse. I am summarizing the experience here so others can achieve the same faster. Amazon Redshift is the analytical data warehouse platform on the AWS cloud, with

Taking advantage of Machine Learning (ML), without even starting

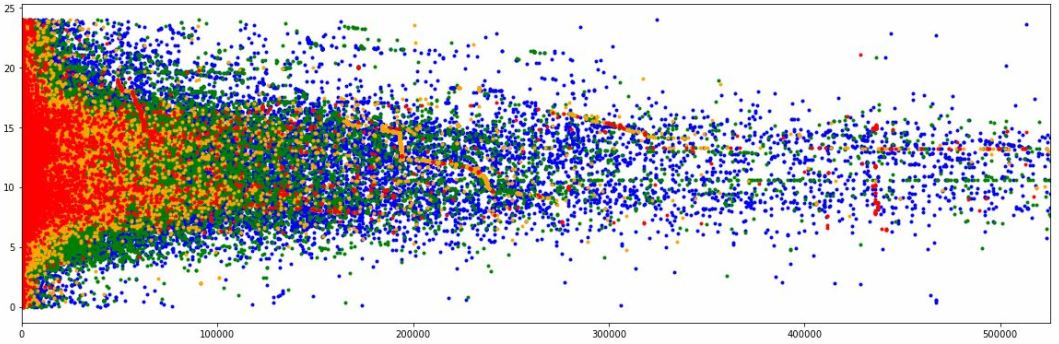

Here is a humorous recent example of what one can achieve with basic data exploration, without even going into any advanced ML techniques. In this recent project I was asked to study the response time log of an online service running

4 reasons for building Data Lakes… or not

Data Lakes are repositories where data is ingested and stored in its original form, without much (or any) preprocessing. This is in contrast to traditional data warehouses, where much effort goes into ETL processing, data cleansing and aggregation, to